The Weekly Byte: The Agentic Coding Wars Heat Up

OpenAI's Codex takes direct aim at Claude Code, Anthropic fires back with Opus 4.7, Google reshapes Chrome, and AI agent observability has a breakout week.

Hey folks, welcome back to The Weekly Byte! This week the AI coding tools slugged it out in public, Google turned Chrome into a research cockpit, and a bunch of quiet but important plumbing news dropped for anyone building with AI agents.

🔥 Lead Story

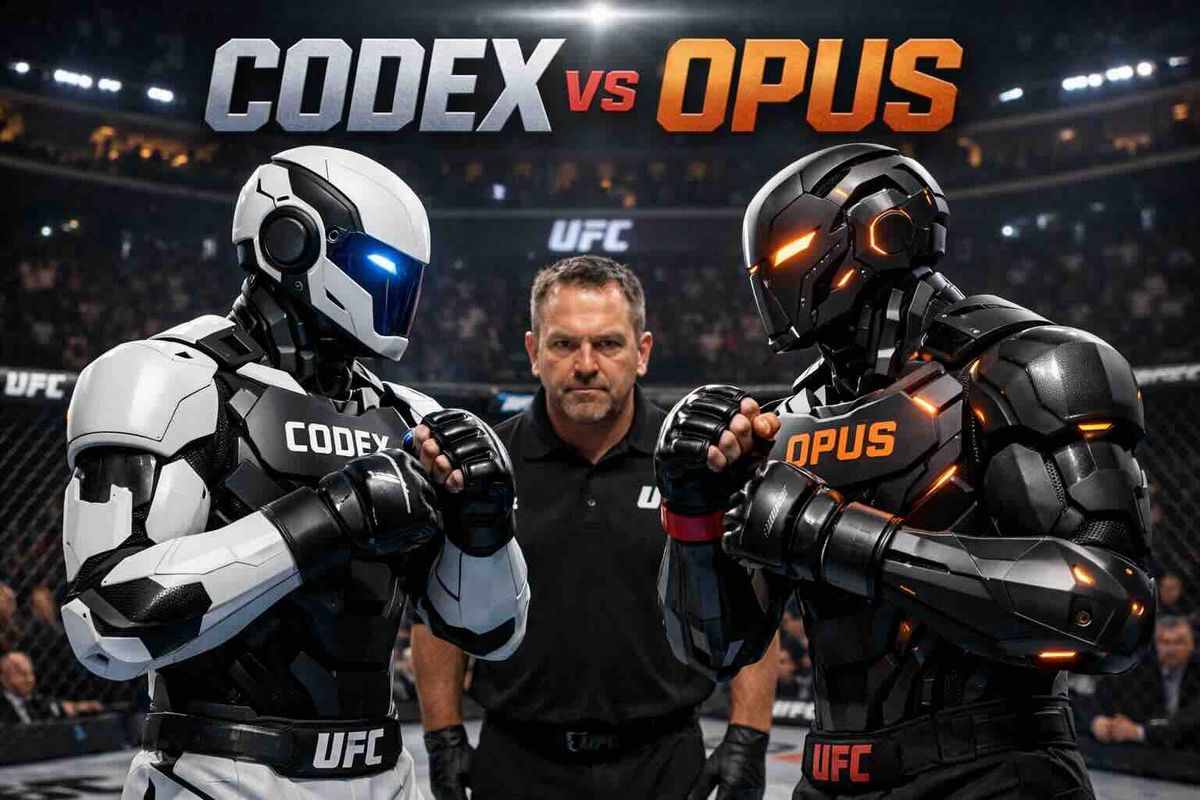

The biggest story of the week is a collision. OpenAI shipped a major Codex update that lets its coding agent drive your actual macOS apps, generate images, remember across sessions, and run plugins. Hours later, Anthropic answered with Claude Opus 4.7, its most capable generally available model, tuned for the same market. Codex is the direct shot at Claude Code everyone has been expecting, and Opus 4.7 is Anthropic's reply.

For the non-developers, think of it like two rival power tools fighting for a spot on your workbench. Both are racing past the chat box toward something that feels like a teammate: clicking around your apps the way a junior engineer would, remembering what you worked on yesterday, reading a screenshot and acting on it. Which one is ahead this week matters less than the fact that the category just redefined what an AI coding tool is.

Why it matters: If your team has not picked a lane yet, this is the week to start running pilots. The capability gap between "toy" and "useful" just shrank to roughly zero, and the winner for your workflow is the one your engineers actually adopt, not the one with the flashier benchmark.

📰 Top Stories

1. OpenAI's big Codex update is a direct shot at Claude Code

Codex can now control your desktop apps, generate images inline, remember past sessions, and run plugins. The Verge's headline says it out loud: this is OpenAI trying to take back the agentic coding crown after Claude Code quietly ate its lunch.

Why it matters: Agentic coding tools have gone from novelty to line item. Expect procurement conversations about which one your org standardizes on.

2. Claude Opus 4.7 arrives with better vision, memory, and instruction-following

Anthropic's new flagship focuses on the unglamorous stuff: following complex instructions without going off the rails, reading screenshots properly, and holding context across longer tasks. Notably, Anthropic's own "Mythos Preview" scored higher on every eval, which hints at what is coming next.

Why it matters: Instruction-following and vision are exactly the pain points teams hit when they try to run AI in real workflows. "Boring" improvements here translate directly into fewer 2am fix-ups.

3. Google's AI Mode now opens sources side-by-side in Chrome

Click a citation and the page slides in next to your chat, so you can ask follow-up questions without losing your place. AI Mode can also now search across your open tabs. It is a small UI change that fundamentally alters how research feels.

Why it matters: The browser is quietly becoming the real AI interface. If you build docs, dashboards, or internal tools, assume users will soon be reading them inside a chat pane.

4. Google launches a Gemini app on Mac

A native Mac app that uses Option+Space to summon a floating Gemini bubble, which can see whatever window you share. It is Google's clearest push yet onto Apple's desktop turf.

Why it matters: The "keyboard shortcut to an AI" is becoming a standard OS-level pattern. If your product does not have one, you are building for yesterday's desktop.

5. OpenAI introduces GPT-Rosalind for life sciences

Named for Rosalind Franklin, this is a reasoning model tuned for drug discovery, genomics, and protein work. OpenAI is following the same playbook Google used with AlphaFold: bring a purpose-built model to a hard scientific domain.

Why it matters: Vertical-specific frontier models are the next wave. Expect "GPT-for-your-industry" announcements to pile up over the next year.

6. OpenAI opens Trusted Access for Cyber with GPT-5.4-Cyber and $10M in grants

A new tier of API access for vetted security firms, plus a cyber-tuned model and grant funding aimed at defenders specifically. The framing: AI can harden the good guys faster than the bad guys, if the good guys get first dibs.

Why it matters: Gated, safety-reviewed model access is how regulators will expect frontier capabilities to be distributed. This is the template.

7. Cyberscammers are bypassing bank security with off-the-shelf Telegram tools

MIT Tech Review documents fraud operations in Cambodia defeating bank "liveness" checks (the video selfie step that is supposed to prove you are a real person) using cheap tools sold on Telegram. The attack takes only about 90 seconds.

Why it matters: Identity verification built on "can a camera see a face" is officially broken. If your product relies on liveness checks, it is time to stack another layer.

8. InsightFinder raises $15M to debug AI agents in production

CEO Helen Gu nails the problem: monitoring the model is not enough anymore; you have to monitor the whole stack now that AI is inside it. That is observability for AI agents, and it is a real category.

Why it matters: If your team is shipping agent features, your existing APM and logging setup probably does not see half of what is happening. That gap is where the next generation of incidents will live.

🛠️ Tool of the Week

HoloTab from Hugging Face is a browser-based "computer use" agent that drives web apps the way a person would, clicking buttons and filling forms, without needing a formal API for every tool. Think of it as a way to automate the messy corners of the web where no proper integration exists. Open and browser-native, which makes it a nice sandbox to experiment with computer-use agents without installing a desktop runtime.

💡 Quick Takes

- Allbirds pivots to AI, stock jumps 600%. A shoe company renamed itself into the AI hype cycle and the market ate it up. We are deep into "dot com 2.0" territory.

- OpenAI's Agents SDK gets a native sandbox. The new version ships a built-in sandbox and a model-native harness, so long-running agents have somewhere safe to execute code. This is the sort of plumbing that makes production agents actually workable.

- Spotify goes "agentic-first" internally. Their engineering org is redesigning internal developer platforms so AI agents and humans use the same tools. If it is good for engineers, it had better be good for agents too.

- AI shopping traffic is real money now. Adobe data shows AI-driven traffic to US retailers jumped 393% in Q1, and those visitors convert better than organic. Retail SEO teams, your job description is changing.

- Meta Quest 3 prices jump because of RAM shortages. Quest 3 goes up $100. The AI datacenter memory crunch is now touching consumer electronics. Expect more of this.

📊 Numbers That Matter

| Metric | Value | Context |
|---|---|---|
| AI traffic to US retailers (Q1) | +393% | And it converts better than regular traffic |
| Allbirds stock pop on AI pivot | +600% | A shoe brand; the assets sold for $39M |
| Public sector leaders worried about AI data security | 79% | Capgemini survey of global execs |
| OpenAI cyber defense grant pool | $10M | API credits for vetted security firms |
| Time to bypass a bank liveness check | ~90 sec | Using tools sold openly on Telegram |

🎯 Brian's Take

Three things happened at once this week, and together they tell you where we actually are. OpenAI shipped a Codex that can drive your desktop. Anthropic shipped an Opus that is better at the unsexy parts (vision, memory, following instructions). And a small company called InsightFinder raised $15M to watch what AI agents do when nobody is looking. That last one matters just as much as the first two.

We have spent two years obsessing over which model is smartest. The actual work in front of teams now is different: how do I give this thing access to my systems without it wrecking something, and how do I find out quickly when it does? The Spotify story in Quick Takes tells the same story from another angle. They are rebuilding their internal developer platform so AI agents and human engineers share the same paved roads, the same guardrails, the same logs. That is not a model problem. That is a platform engineering problem.

My bet for the next 12 months: the companies that get ahead are not the ones running the biggest model. They are the ones who put in the boring infrastructure (permissions, audit logs, sandboxes, observability) so an agent can do real work without becoming a liability. The hype is on the chat windows. The money is going to be in the plumbing.
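To make the "boring infrastructure" point concrete, here is a minimal Python sketch of two of those pieces, permissions and audit logs, wrapped around an agent's tool calls. Everything in it is hypothetical and for illustration only: the agent name `release-bot`, the tools `read_file` and `delete_branch`, and the in-memory `AUDIT_LOG` are assumptions, not anything from the products covered above. A real setup would presumably write to an append-only log store and run tools inside a sandbox.

```python
import json
import time
from typing import Any, Callable

# Hypothetical allowlist: which tools each agent is permitted to call.
PERMISSIONS: dict[str, set[str]] = {
    "release-bot": {"read_file"},
}

# Stand-in for a real append-only audit sink (assumption: in-memory list).
AUDIT_LOG: list[str] = []


def read_file(path: str) -> str:
    # Placeholder tool; performs no real I/O.
    return f"<contents of {path}>"


def delete_branch(name: str) -> str:
    # Placeholder for a destructive tool.
    return f"deleted {name}"


TOOLS: dict[str, Callable[..., Any]] = {
    "read_file": read_file,
    "delete_branch": delete_branch,
}


def call_tool(agent: str, tool: str, **kwargs: Any) -> Any:
    """Check the allowlist, record the attempt, then run the tool."""
    allowed = tool in PERMISSIONS.get(agent, set())
    # Log every attempt, including denied ones, before anything executes.
    AUDIT_LOG.append(json.dumps({
        "ts": time.time(),
        "agent": agent,
        "tool": tool,
        "args": kwargs,
        "allowed": allowed,
    }))
    if not allowed:
        raise PermissionError(f"{agent} may not call {tool}")
    return TOOLS[tool](**kwargs)
```

Calling `call_tool("release-bot", "read_file", path="README.md")` goes through, while `call_tool("release-bot", "delete_branch", name="main")` raises `PermissionError`. Both attempts land in the log, which is the part that lets you find out quickly when an agent tries something it should not.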

Until next week, keep shipping! 🚀

- Brian

Follow me on X: @idomyowntricks