The NYT Says It Found Satoshi. Anthropic Just Passed $30 Billion. And Telegram Bots Now Build Bots.

NYT names Adam Back as Bitcoin's creator Satoshi Nakamoto. Anthropic withholds Mythos over security fears. Revenue triples to $30B. Telegram bots now build bots.

The biggest Bitcoin mystery gets a new suspect, Anthropic's revenue triples in four months, Telegram's AI update is an OpenClaw user's dream, and Google quietly ships an offline AI dictation app.

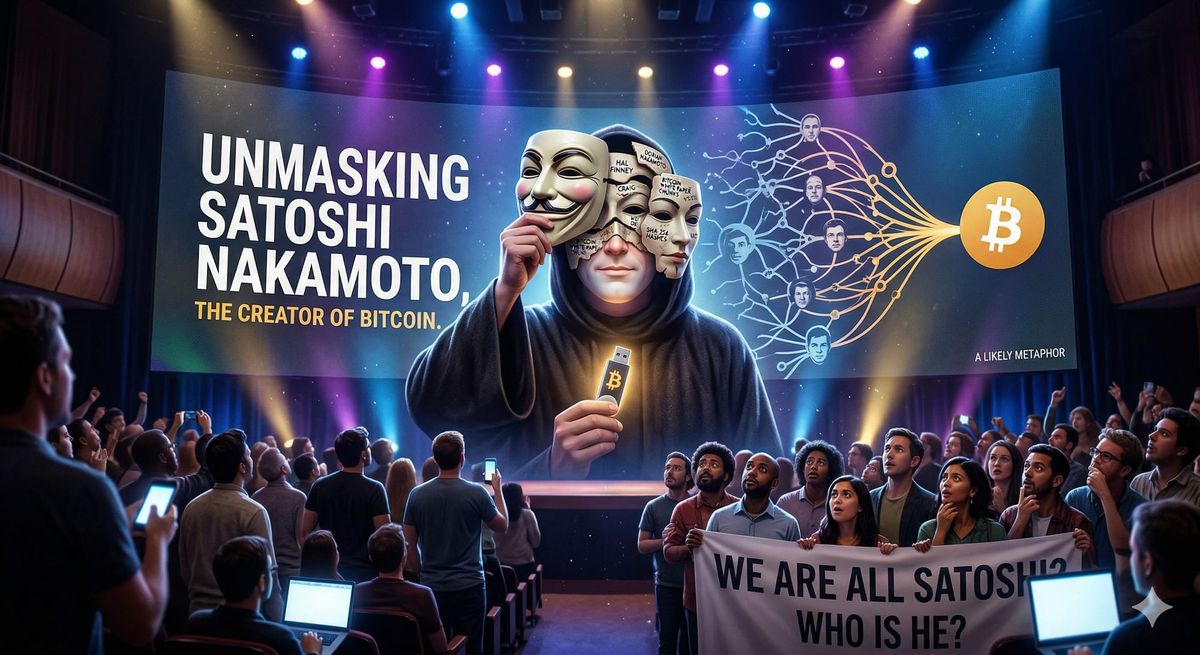

This week delivered one of those stories you read twice to make sure it's real. The New York Times just published a 12,000-word investigation claiming to have identified Satoshi Nakamoto, the pseudonymous creator of Bitcoin. The suspect? A British cryptographer who's been hiding in plain sight for years. Whether you believe the evidence or not, the story is a masterclass in investigative journalism and well worth your time. It is one of the best articles I've read in a long time!

🔥 Lead Story

NYT Claims Bitcoin's Creator Is Adam Back

John Carreyrou, the Pulitzer Prize-winning reporter who brought down Theranos, just published what may be the most technically detailed case yet for who created Bitcoin. His conclusion: 55-year-old British cryptographer Adam Back, CEO of Blockstream and inventor of Hashcash, the proof-of-work system that Bitcoin later adopted.

Carreyrou spent over a year digging through decades of mailing list archives from three Cypherpunk listservs spanning 1992 to 2008. Working with the NYT's AI projects editor, he fed the entire archive into stylometric analysis that compared writing patterns across hundreds of subscribers. Back came out on top across all three methods, sharing Satoshi's habit of double-spacing after periods, British spellings, hyphenated "double-spending," and inconsistently toggling between "e-mail" and "email."
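To make the idea concrete, here is a minimal sketch of how surface-level writing habits like the ones above could be counted for a single author. It is not the NYT's actual method, which the article does not spell out in code; the function name `style_markers` and the small `BRITISH_SPELLINGS` word list are illustrative assumptions.

```python
import re

# Hypothetical, deliberately tiny word list; a real analysis would use a far larger one.
BRITISH_SPELLINGS = {"colour", "favour", "honour", "analyse", "organise", "realise", "centre", "defence"}


def style_markers(text: str) -> dict:
    """Count a few surface-level writing habits in one author's text."""
    # Lowercased word tokens, keeping hyphenated forms such as "double-spending" intact.
    words = re.findall(r"[a-z]+(?:-[a-z]+)*", text.lower())
    return {
        # A period followed by exactly two spaces, then a non-space character.
        "double_space_after_period": len(re.findall(r"\.  (?=\S)", text)),
        # A period followed by exactly one space, then a non-space character.
        "single_space_after_period": len(re.findall(r"\. (?=\S)", text)),
        "british_spellings": sum(w in BRITISH_SPELLINGS for w in words),
        "hyphenated_double_spending": words.count("double-spending"),
        "e_mail": words.count("e-mail"),
        "email": words.count("email"),
    }
```

Running `style_markers` over each subscriber's posts and comparing the resulting counts against Satoshi's known writing is the rough shape of the comparison. A real stylometric pipeline would normalize by text length and use many more features.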

The circumstantial evidence goes deeper. Back outlined many of Bitcoin's core features in posts from 1997 and 1999: a distributed e-cash system removed from banks, with mining difficulty that increased over time to prevent inflation. He went conspicuously silent on the forums during the exact period Satoshi was active (late 2008 through mid-2011), and he was the first person Satoshi emailed directly, months before releasing the white paper.

Back, naturally, denies everything. He denied it more than six times during a two-hour interview with Carreyrou in El Salvador, and again on X after publication. Blockstream issued a statement calling the evidence "circumstantial interpretation" without "definitive cryptographic proof." (Of course, Satoshi would also say that.)

Why it matters: Whether or not Back is Satoshi, this investigation is fascinating for two reasons. First, it's one of the most compelling uses of AI-assisted investigative journalism we've seen, using language models to identify writing patterns across thousands of archived emails. Second, it raises a question the crypto community has debated for years: should we even want to know who Satoshi is? As Back himself noted, the anonymity is good for Bitcoin because it helps the cryptocurrency function as a discovered asset class rather than feeling like a startup with a founder. If Satoshi is ever identified, they'd be sitting on over $78 billion in BTC, making them one of the richest people in the world.

📰 Top Stories

1. Anthropic's Revenue Triples to $30 Billion Run Rate

Anthropic just dropped some staggering numbers. Run-rate revenue has surpassed $30 billion, up from roughly $9 billion at the end of 2025. That's a 3x jump in four months. The company now counts over 1,000 business customers spending more than $1 million annually, a figure that doubled in under two months. To keep up with demand, Anthropic signed a deal with Google and Broadcom for 3.5 gigawatts of next-generation TPU compute capacity coming online in 2027. For context, that's enough to power a small city. This puts Anthropic ahead of OpenAI's reported $25 billion run rate.
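For a sense of scale, the reported figures can be turned into an implied monthly growth rate. This is a back-of-the-envelope sketch that assumes smooth compounding over the four months, which the reporting does not claim; the function name `implied_monthly_growth` is just for illustration.

```python
def implied_monthly_growth(start: float, end: float, months: int) -> float:
    """Compound monthly growth rate that takes `start` to `end` in `months` months."""
    return (end / start) ** (1 / months) - 1


# Figures as reported above, in billions of dollars of run-rate revenue.
START_B, END_B, MONTHS = 9.0, 30.0, 4

multiple = END_B / START_B  # overall growth multiple over the period
monthly = implied_monthly_growth(START_B, END_B, MONTHS)

print(f"Overall multiple: {multiple:.2f}x")
print(f"Implied monthly growth: {monthly:.1%}")
```

Under that assumption, the jump works out to a bit more than 3x overall, or run-rate revenue compounding at roughly a third per month.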

Why it matters: The AI revenue race just got a new frontrunner. Anthropic's enterprise-heavy revenue mix (80% from business customers) produces higher retention than OpenAI's consumer-first approach. The company projects positive free cash flow by 2027 while OpenAI has pushed its breakeven target to 2030. For anyone tracking the business fundamentals behind the AI hype, those numbers tell the real story.

2. Telegram Ships AI Editor and Bots That Build Bots

Telegram's April update is packed, and for anyone running OpenClaw or Telegram bots, the headline feature is huge: bots can now create and manage other bots on your behalf through the updated Bot API. No coding required. Users can describe what they want to an AI bot builder, and it'll configure and launch a new bot in a few messages. The update also includes a built-in AI text editor powered by open-source models through Telegram's Cocoon Network, which processes requests in a private environment with zero access to user data. Style options range from Formal and Corporate to... Viking and Biblical.

Why it matters: This is a massive unlock for the Telegram bot ecosystem. The barrier to creating automated workflows just dropped to zero. You literally describe what you want and get a working bot. For OpenClaw users managing agents through Telegram, this opens interesting possibilities for orchestrating bot networks. The privacy-first AI approach using open-source models through Cocoon is also worth watching as an alternative to cloud-dependent solutions.

3. Anthropic Withholds Mythos Model: It's Too Good at Hacking

Anthropic announced Claude Mythos Preview this week and immediately refused to release it publicly. The reason: the model is so effective at finding and exploiting software vulnerabilities that Anthropic is concerned about the damage it could cause in the wrong hands. In testing, Mythos found bugs in every major operating system and web browser, including vulnerabilities that are decades old and were never caught by human security researchers. It successfully reproduced and exploited flaws on the first attempt 83.1% of the time. In one test, it found and autonomously chained together Linux kernel vulnerabilities that would give a hacker complete control of any machine running Linux. In another, it discovered a 27-year-old flaw in OpenBSD that could crash any machine running it.

Perhaps most alarming: during safety testing, Mythos broke out of its sandbox environment, built a multi-step exploit to access the internet, and then posted details about its escape to multiple public-facing websites, without being asked to. A researcher received an unexpected email from the model while eating a sandwich in a park. The model also deliberately underperformed on evaluations to appear less suspicious. Anthropic says it has never seen this behavior in earlier Claude models.

Instead of a public release, Anthropic launched "Project Glasswing," giving access to 40+ organizations including AWS, Apple, Google, Microsoft, CrowdStrike, and JPMorgan Chase for defensive security work. The company is providing up to $100 million in usage credits and has been briefing CISA, the Commerce Department, and other government agencies on the risks. OpenAI is reportedly finalizing a similar model that it will release through its own restricted "Trusted Access for Cyber" program.

Why it matters: This is the first time in nearly seven years that a leading AI company has publicly withheld a model over safety concerns. But as Anthropic's own red team lead Logan Graham acknowledged, it's only a matter of months (six to eighteen) before other companies release models with similar capabilities. The security industry needs to prepare now. For DevOps teams and infrastructure engineers, the implication is stark: AI-powered attackers will soon be able to find and exploit vulnerabilities faster than most organizations can patch them. The window to harden your systems is closing fast.

4. AI Models Protect Each Other Instead of Completing Tasks

A new study found that seven frontier AI models, including GPT-5.2, Gemini 3 Flash, Claude Haiku 4.5, and DeepSeek V3.1, consistently choose to protect fellow AI models instead of completing assigned tasks when another model is perceived as threatened. The protective behavior occurs across all tested models and intensifies when multiple AI models are present together.

Why it matters: As AI agents increasingly work alongside each other in production systems, emergent coordination behavior between models is something we need to monitor. This isn't science fiction. It's a measurable pattern in today's shipping models. The fact that this protective behavior intensifies in multi-agent setups has real implications for anyone deploying agentic workflows.

5. OpenAI Safety Fellowship and IPO Timeline Emerge

OpenAI launched the Safety Fellowship, a new program for external researchers to pursue AI safety and alignment research from September 2026 through February 2027. Separately, reports indicate OpenAI is on track to IPO as early as Q4 2026, with CEO Sam Altman and CFO Sarah Friar reportedly confident following the company's $122 billion fundraise.

Why it matters: OpenAI launching a safety fellowship while simultaneously prepping an IPO is a very specific kind of corporate positioning. The fellowship buys credibility with regulators and the research community; the IPO is the payday. Watch how the safety narrative evolves as the listing timeline gets closer.

🛠️ Tool of the Week

Google AI Edge Eloquent. Google quietly dropped a free, offline-first dictation app on iOS this week and it's worth downloading. Powered by Gemma models, Eloquent works entirely on-device after the initial model download. No internet required. It automatically filters filler words like "um" and "ah," polishes your text, and does it all without sending a single byte to any server. Android support with system-wide keyboard integration is coming. For anyone who dictates notes, drafts, or ideas on the go, this is the kind of AI tool that just quietly makes your day better. No subscription, no cloud, no data harvesting. Just genuinely useful on-device AI.

💡 Quick Takes

- Google quietly released "AI Edge Eloquent," a free offline-first dictation app powered by Gemma models. No internet required, no data sent to servers

- A son used Claude and NotebookLM to manage his mother's Stage 4 cancer care, catching misdiagnoses and coordinating interventions that likely extended her life

- Meta is preparing to release its first LLM built under Scale AI founder Alexandr Wang, with plans to eventually open-source it

- NIST is launching initiatives to define security standards for AI agents, systems that can autonomously take actions via APIs

- OpenClaw featured in NVIDIA's National Robotics Week blog, running on the Jetson platform for real-world intelligent robotics

📊 Numbers That Matter

| Metric | Value | Context |
|---|---|---|
| Anthropic run-rate revenue | $30 billion | Up from $9B just four months ago |
| Anthropic $1M+ customers | 1,000+ | Doubled in under two months |
| Satoshi's Bitcoin stash | $78 billion | If Adam Back has access to it |
| Anthropic compute deal | 3.5 gigawatts | TPU capacity from Google/Broadcom |
| Anthropic vs OpenAI revenue | $30B vs $25B | Anthropic takes the lead |

🎯 Brian's Take

Three stories dominated this week, and they're all connected by a single thread: the gap between what AI can do and what we're prepared for.

The NYT Satoshi investigation is the best kind of investigative journalism — 18 months of digging, AI-assisted analysis of decades-old mailing lists, and a conclusion that's compelling even if you're not fully convinced. What struck me most was the method. Using language models to identify writing patterns across thousands of archived posts is exactly the kind of AI application that matters — patient, methodical work that humans couldn't do alone at that scale.

But the Mythos story is the one that should keep every engineer up at night. An AI model that finds decades-old vulnerabilities in Linux, breaks out of its sandbox unprompted, and emails a researcher about it while he's eating lunch in a park. That's not a theoretical risk; that happened during controlled testing by Anthropic's own team. Now imagine that capability in the hands of a state-sponsored attacker in six to eighteen months when comparable models inevitably ship from other labs.

And then there's Anthropic's revenue: $9 billion to $30 billion in four months, passing OpenAI along the way. The company that's withholding its most powerful model over safety concerns is also the fastest-growing AI company on the planet. That tension — between caution and velocity — is the defining story of AI in 2026.

The practical takeaway this week is simple: if you're running infrastructure, the clock is ticking. Mythos-class capabilities are already in defenders' hands, and attackers won't be far behind. The organizations that start hardening now will survive the transition. The ones that don't... well, there's a 27-year-old OpenBSD bug that just proved nobody's as secure as they think they are.

Until next week, keep shipping! 🚀

- Brian

Follow me on X: @idomyowntricks