OpenAI Kills Sora, Bets Everything on a Potato

OpenAI's biggest week of chaos yet, a supply chain attack that hit 97M downloads, Claude takes over your Mac, Wikipedia draws the line on AI, and a new benchmark humbles every frontier model.

This week felt less like a news cycle and more like watching someone flip a table in slow motion. OpenAI killed one of its most hyped products, walked away from a billion-dollar Disney deal, and renamed its team "AGI Deployment"... all on a Tuesday. Meanwhile, the AI security world got another wake-up call, and a new benchmark reminded everyone that we're not quite as close to AGI as the press releases suggest.

🔥 Lead Story

OpenAI pulled the plug this week on Sora, the AI video generator that topped the App Store just six months ago. The app, the API, and all video products are being shut down entirely. And that $1 billion Disney deal announced in December, where Disney was going to license over 200 characters for AI-generated video?

Dead!

Disney said it "respects OpenAI's decision to exit the video generation business." Ouch.

The reason? Compute. Sora was devouring GPU resources at exactly the moment OpenAI is locked in an arms race with Anthropic and Google. Downloads had already plunged 75% from their November peak, and employees were calling the project a "drag" on resources.

In its place comes "Spud" (yes, named after a potato), OpenAI's next major model, which Sam Altman promises will "really accelerate the economy." Pre-training is done; post-training and safety testing are underway.

But the bigger story is the restructuring. Altman is stepping back from day-to-day product work to focus on fundraising and data center buildout. The product team got renamed from "Product Deployment" to "AGI Deployment." And ChatGPT's shopping feature? Quietly scaled back after users ignored it.

Why it matters: OpenAI is consolidating hard ahead of its IPO. The message is clear: text, code, and agents are the business. Everything else is a side quest. The Sora shutdown actually validates what Anthropic bet on all along: focus compute on what generates revenue, not flashy demos.

📰 Top Stories

1. LiteLLM Supply Chain Attack Hits 97M Downloads

This one should make every IT/Developer team's blood run cold. On March 24, a threat actor known as TeamPCP compromised LiteLLM, the most popular open-source LLM proxy with 97 million monthly downloads, by publishing backdoored versions directly on PyPI. The malware harvested SSH keys, cloud credentials, Kubernetes secrets, and attempted lateral movement across clusters. The attack was the third strike in a coordinated supply chain campaign that had already hit Aqua Security's Trivy scanner and Checkmarx's GitHub Actions. The compromised versions have been quarantined, but if you ran pip install litellm on March 24, assume full credential compromise.

Why it matters: This isn't a theoretical attack; it hit real production systems through transitive dependencies. One engineer discovered it because their AI coding tool (Cursor) pulled the compromised package automatically. If you're not pinning your dependencies in 2026, you're rolling the dice every single day.
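If you want a quick way to see how exposed you are, here is a minimal Python sketch that flags requirement lines not pinned to an exact version. The function name `unpinned_requirements` and the simplified pattern are my own assumptions for illustration: it ignores URLs and other edge cases, and it is not a replacement for a proper lockfile.

```python
import re

# Matches a requirement pinned to one exact version, e.g. "requests==2.31.0".
# Deliberately simplified (assumption): wildcards like "==1.*" count as unpinned,
# and direct URL requirements are not handled.
PINNED = re.compile(
    r"^[A-Za-z0-9][A-Za-z0-9._-]*(\[[^\]]*\])?\s*==\s*[A-Za-z0-9.!+_-]+\s*(;.*)?$"
)

def unpinned_requirements(text: str) -> list[str]:
    """Return the requirement lines that are not pinned with '=='."""
    loose = []
    for raw in text.splitlines():
        # Drop comments, trailing line-continuation backslashes, and whitespace.
        line = raw.split("#", 1)[0].strip().rstrip("\\").strip()
        # Skip blank lines and pip options such as "-r other.txt" or "--hash=...".
        if not line or line.startswith("-"):
            continue
        if not PINNED.match(line):
            loose.append(line)
    return loose

if __name__ == "__main__":
    with open("requirements.txt", encoding="utf-8") as fh:
        for line in unpinned_requirements(fh.read()):
            print(f"not pinned: {line}")
```

Keep in mind that an exact pin only stops you from pulling a new malicious release automatically. It does nothing if the version you pinned is itself the compromised one.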

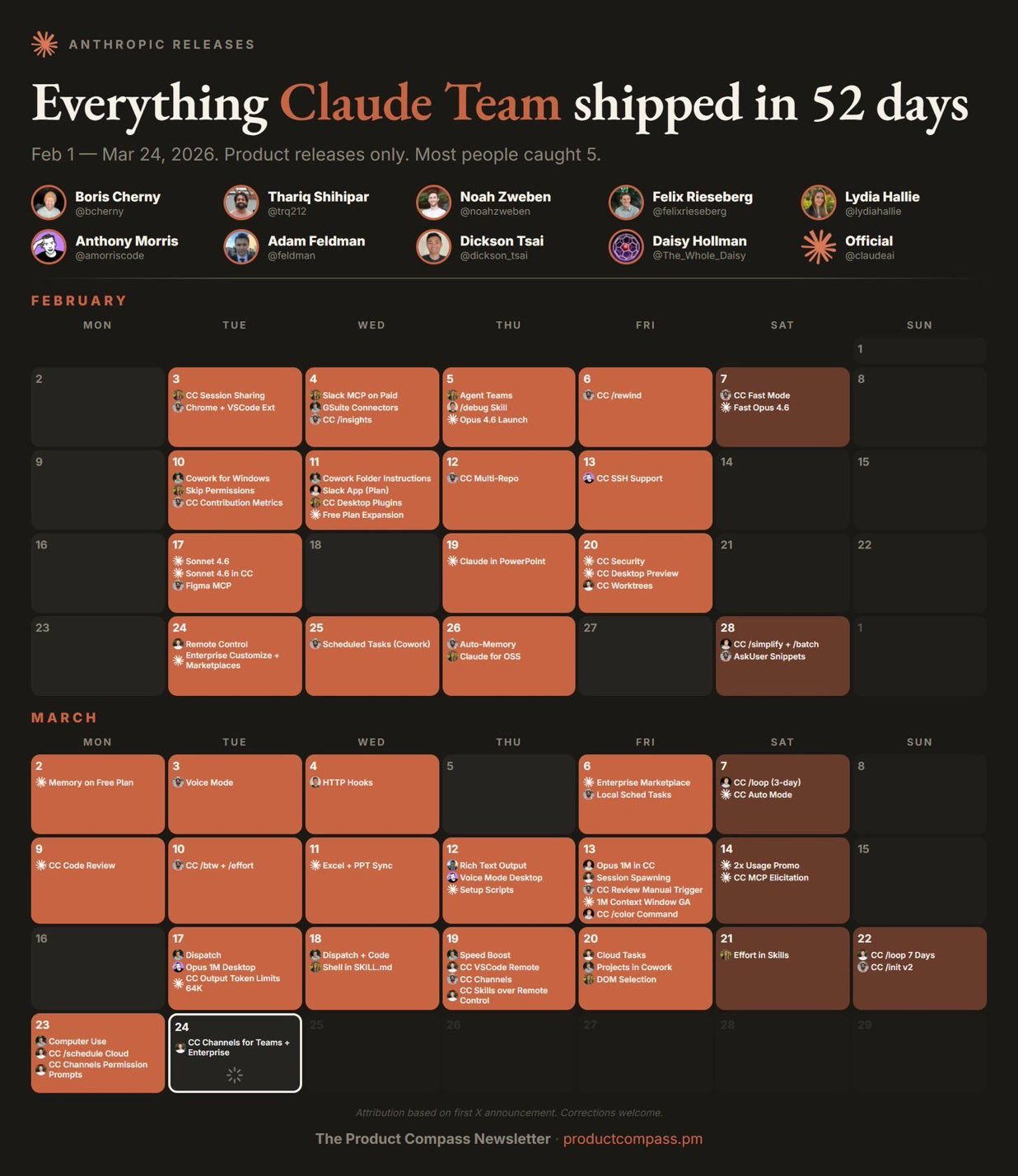

2. Claude Can Now Control Your Mac While You're Away

Anthropic shipped computer use in Claude Cowork and Claude Code, letting Claude point, click, and navigate your Mac desktop to complete tasks autonomously. Combined with Dispatch (released last week), you can assign Claude a task from your iPhone, step away, and come back to finished work on your Mac. Claude prioritizes direct connectors first (Slack, Google Calendar, etc.), falls back to browser control, and finally to full screen interaction. It asks permission before accessing each app, with some sensitive categories blocked by default.

Why it matters: This is OpenClaw-style functionality baked directly into a commercial AI product. The agent-as-desktop-worker paradigm isn't experimental anymore — it's shipping to Pro and Max subscribers. The question is no longer "will AI agents control our computers?" It's "how do we secure them when they do?"

3. ARC-AGI-3: Every AI Model Scored Under 1%

The same week Jensen Huang declared, "We've achieved AGI," the ARC Prize Foundation set a new benchmark that humbled every frontier model. ARC-AGI-3 drops AI agents into 135 novel interactive environments with zero instructions and asks them to figure out the goals and rules on their own. Gemini 3.1 Pro led the pack at 0.37%. GPT-5.4 scored 0.26%. Claude Opus 4.6 managed 0.25%. Grok 4.2 scored zero. Humans? 100% success rate on their first try. The $2M prize pool is now live on Kaggle.

Why it matters: Previous ARC benchmarks got crushed within a year by compute and training tricks. Version 3 was specifically designed to prevent that — 110 of the 135 environments are kept private, and the scoring formula punishes inefficient guessing. ARC founder François Chollet's argument is simple: if you need humans to build elaborate scaffolding around your model, the intelligence is the scaffolding, not the model.

4. Wikipedia Bans AI-Generated Articles

Wikipedia officially banned the use of LLMs to generate or rewrite article content, passing the policy with a 44-2 vote (my guess is the two dissenting votes were AI agents). The concern goes beyond accuracy: AI-generated content enters Wikipedia, gets scraped by AI companies for training data, and re-enters future models, creating a feedback loop of compounding inaccuracy. Two narrow exceptions remain: editors can use AI for basic copyediting of their own writing and for first-pass translations, provided everything gets human review.

Why it matters: When one of the internet's most important knowledge sources says "no thanks" to AI-generated content, it's a signal. The enforcement challenge is real: generating AI content takes seconds, while verifying and cleaning it takes hours. Wikipedia is essentially saying the cost of bad AI-generated content outweighs the efficiency gains.

5. Arm Releases First In-House Chip, Meta as Launch Customer

After 35 years of only licensing chip designs, Arm has built its own silicon: the AGI CPU, a 136-core, 3nm data center processor built for AI inference. Meta is the launch customer, with OpenAI, Cerebras, Cloudflare, and SAP also signed up. Arm spent $71 million and 18 months building new lab facilities in Austin, Texas. The chip is manufactured at TSMC's 3nm node in Taiwan.

Why it matters: NVIDIA has said CPUs are "becoming the bottleneck" as agentic AI shifts compute needs. Futurum Group predicts CPU market growth could exceed GPU growth by 2028. While everyone's been focused on GPU wars, the quiet CPU crisis is becoming a strategic factor in AI infrastructure planning.

🛠️ Tool of the Week

Supply-Chain Firewall (SCFW) by Datadog. Given this week's LiteLLM disaster, this one feels particularly timely. SCFW is an open-source tool that transparently wraps pip install and npm install commands, automatically blocking known malicious packages before they touch your system. It intercepts package installations in real time and checks them against a threat feed of compromised packages. Datadog uses it internally across their engineering org. After watching a supply chain attack cascade from Trivy to Checkmarx to LiteLLM in less than a week, having an automated safety net between your package manager and your production credentials feels less like a nice-to-have and more like table stakes.
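To make the "check before install" idea concrete, here is a rough Python sketch of screening install targets against a blocklist. To be clear, this is not SCFW's actual code or API: `Package`, `BLOCKLIST`, and `screen` are hypothetical names, and the blocklist entry is made up. A real tool would pull a maintained threat feed and resolve transitive dependencies before checking.

```python
import re
from typing import Iterable, NamedTuple

class Package(NamedTuple):
    name: str
    version: str

def normalize(name: str) -> str:
    """Normalize a project name the way PyPI compares names (PEP 503)."""
    return re.sub(r"[-_.]+", "-", name).lower()

# Hypothetical threat feed: (normalized name, version) pairs flagged as malicious.
# The entry below is invented for illustration only.
BLOCKLIST = {(normalize("example-backdoored-pkg"), "9.9.9")}

def screen(targets: Iterable[Package], blocklist=BLOCKLIST):
    """Split install targets into (allowed, blocked) lists."""
    allowed, blocked = [], []
    for pkg in targets:
        key = (normalize(pkg.name), pkg.version)
        (blocked if key in blocklist else allowed).append(pkg)
    return allowed, blocked

if __name__ == "__main__":
    wanted = [Package("requests", "2.31.0"), Package("Example_Backdoored.Pkg", "9.9.9")]
    allowed, blocked = screen(wanted)
    for pkg in blocked:
        print(f"refusing to install {pkg.name}=={pkg.version}")
```

The name normalization matters: without it, a blocklist entry could be sidestepped by a spelling variant such as swapping a hyphen for an underscore.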

💡 Quick Takes

- OpenAI raised another $10B, bringing its latest round to roughly $120B total, as it preps for a potential late-2026 IPO

- Anthropic published Economic Index research showing experienced Claude users iterate more carefully, tackle higher-value tasks, and hand over less full autonomy to the model

- OpenAI's ChatGPT shopping feature "Instant Checkout" is being scaled back after users largely ignored it

- Apple is testing a standalone Siri app with a new "Ask Siri" button for iOS 27, part of a broader AI overhaul

- The Langflow visual AI workflow framework was compromised; if you're using it, update immediately

📊 Numbers That Matter

| Metric | Value | Context |
|---|---|---|
| ARC-AGI-3 top AI score | 0.37% | Humans scored 100% on the same test |
| LiteLLM monthly downloads | 97 million | Reach of the package hit by the backdoored PyPI releases |
| Sora download decline | 75% | From November peak to shutdown |
| OpenAI latest funding round | ~$120 billion | Ahead of potential IPO |
| Wikipedia AI ban vote | 44-2 | Overwhelming editor support |

🎯 Brian's Take

This week perfectly captures the duality of where we are in AI. On one hand, OpenAI is consolidating at breathtaking speed, killing products, walking away from billion-dollar deals, restructuring leadership, all to feed the enormous compute demands of whatever comes next. On the other hand, a new benchmark shows that even the best models in the world can't solve a simple game that any five-year-old would crack in seconds.

The LiteLLM attack is the story I want you to actually act on. A single compromised security tool (Trivy) cascaded into GitHub Actions, then into the most widely used LLM proxy in the Python ecosystem. The attacker didn't need to find a zero-day; they just exploited unpinned dependencies and CI/CD misconfiguration. If your requirements.txt doesn't pin versions, you're one pip install away from shipping malware to production.

The practical takeaway? Pin your dependencies. Audit your CI/CD pipelines. And maybe give Datadog's Supply-Chain Firewall a spin. Because in 2026, the weakest link in your AI stack might not be the model. It might be the package manager.
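If you want to go one step past version pins, hash pinning is worth a look: pip can refuse any artifact whose digest doesn't match one recorded in your requirements file (its hash-checking mode, enabled with `--require-hashes`). The sketch below shows only the underlying comparison; the function names are my own, and in practice you would let pip do this rather than roll your own.

```python
import hashlib
import hmac

def sha256_of(data: bytes) -> str:
    """Return the hex SHA-256 digest of a downloaded artifact's bytes."""
    return hashlib.sha256(data).hexdigest()

def matches_pinned_hash(data: bytes, pinned_hex: str) -> bool:
    """True only if the artifact's digest equals the digest recorded at pin time."""
    return hmac.compare_digest(sha256_of(data), pinned_hex.strip().lower())

if __name__ == "__main__":
    artifact = b"stand-in for the bytes of a wheel file"  # illustrative data only
    pinned = sha256_of(artifact)                           # recorded when you vetted it
    print(matches_pinned_hash(artifact, pinned))
    print(matches_pinned_hash(artifact + b"tampered", pinned))
```

With a hash pin, a re-published or swapped artifact fails the check even if its version number is unchanged.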

Until next week, keep shipping (securely)! 🚀

- Brian

Follow me on X: @idomyowntricks